Big Tech and AI: Opportunities, risks, and the need for responsible development

The rapid advancement of Artificial Intelligence (AI) has brought both opportunities and risks for Big Tech companies. This article explores the current landscape of Big Tech and AI, highlighting their dominance in the field, the ongoing debate over responsible development, the opportunities presented by increased automation, and the risks associated with trust, liability, and legal uncertainties.

Big Tech and AI

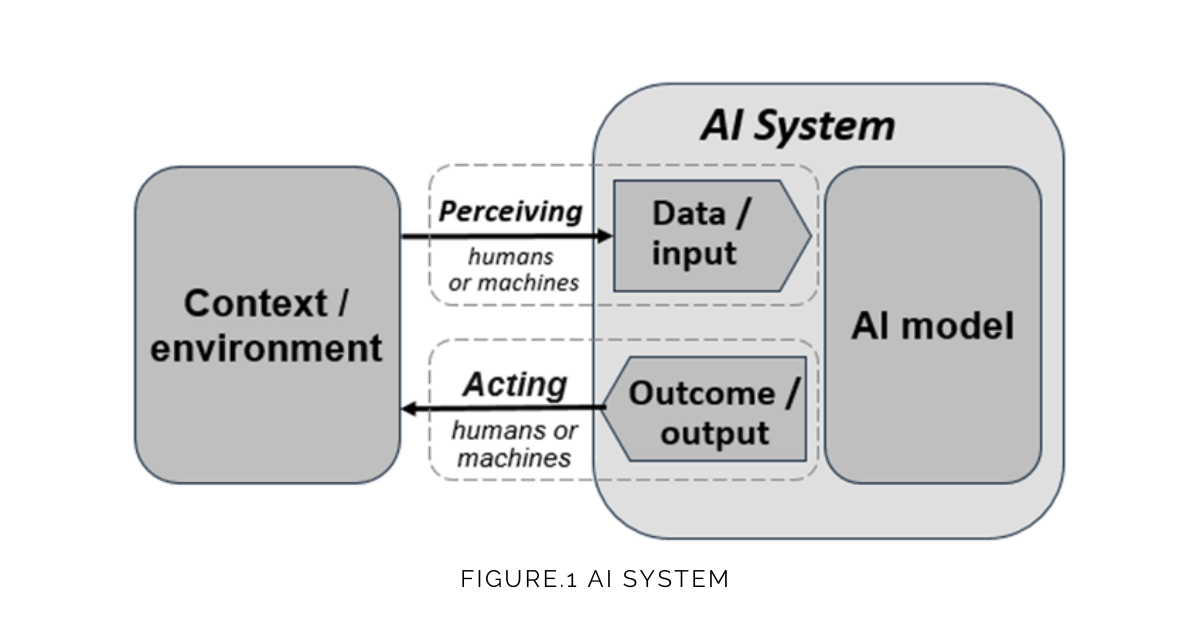

AI system

The view of an AI system presented here is based on the book “Artificial Intelligence: A Modern Approach”, which defines AI as “the designing and building of intelligent agents that receive percepts from the environment and take actions that affect that environment”. Such a system gathers raw data and other inputs from its environment, analyses and interprets them (automatically or with manual input) through an AI model that provides predictions, recommendations or decisions, and then takes actions that influence the state of the environment.
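The perceive, interpret and act cycle can be made concrete with a minimal Python sketch. The thermostat setting, the `Environment` class, the `model` function and the 21-degree target are hypothetical illustrations and are not part of the book's definition. A real AI model would replace the hand-written rule with a learned one.

```python
from dataclasses import dataclass


@dataclass
class Environment:
    """Hypothetical toy environment: a room whose temperature the agent can change."""
    temperature: float

    def percept(self) -> float:
        # Raw input the agent receives (e.g. a sensor reading).
        return self.temperature

    def apply(self, action: str) -> None:
        # The agent's action changes the state of the environment.
        if action == "heat":
            self.temperature += 1.0
        elif action == "cool":
            self.temperature -= 1.0


def model(reading: float, target: float = 21.0) -> str:
    """Hypothetical 'AI model': interprets the percept and decides on an action."""
    if reading < target - 0.5:
        return "heat"
    if reading > target + 0.5:
        return "cool"
    return "idle"


def run_agent(env: Environment, steps: int) -> list[str]:
    actions = []
    for _ in range(steps):
        reading = env.percept()   # 1. perceive
        action = model(reading)   # 2. interpret and decide
        env.apply(action)         # 3. act on the environment
        actions.append(action)
    return actions


env = Environment(temperature=18.0)
print(run_agent(env, 5))  # ['heat', 'heat', 'heat', 'idle', 'idle']
```

Starting from 18 degrees, the agent heats three times and then stays idle once the reading is within half a degree of the target, showing how each action changes what the agent perceives next.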

Dominance in the AI field

Although current AI applications are narrow, each limited to a single specialty, AI has been developed and deployed on a massive scale, from modern web search engines (e.g. Google Search) and recommendation systems (used by YouTube and Amazon) to self-driving cars and generative tools (e.g. ChatGPT).

With access to large amounts of data and the computing power needed to process it, Big Tech is at the forefront of AI, and the tech giants are going all in. Companies are investing unprecedented capital in research and development ($223bn in 2022). Microsoft leads in venture capital and private equity investment in AI-related companies (Fig. 2). It has extended its partnership with OpenAI, the AI research and deployment company behind ChatGPT, investing 10 billion dollars in it. Facebook’s CEO recently said AI was his firm’s largest area of investment, and Alphabet (Google) plans to disclose the size of its AI investment for the first time. The number of job postings from Google, Meta and Microsoft requesting AI skills also keeps growing. Given this dominance, progress in AI technologies within these companies is likely to have a significant influence on their market position and revenue streams.

The debate over AI development

Considerable uncertainty around AI raises questions about the responsible development and deployment of AI technologies. Some of the biggest names in tech signed an open letter calling for a temporary pause in advanced AI development. The signatories asked AI labs to pause, for at least six months, the training of AI systems more powerful than GPT-4, arguing that such systems can pose profound risks to society. However, the letter sparked controversy. Critics said its claims were unclear and ignored more immediate concerns about AI. Some experts accused the Future of Life Institute, the non-profit organisation that coordinated the effort, of focusing on fictional apocalyptic scenarios rather than the current challenges posed by AI.

Opportunities for Big Tech

Increased automation

AI has the potential to automate many tasks previously performed by humans. For example, chatbots can provide customer service and support, while AI algorithms can automate data analysis, financial modelling and even programming. This increased automation could reduce costs for Big Tech companies, increase efficiency and allow employees to focus on higher-level tasks.

However, as AI systems become human-competitive at general tasks, there is concern that AI could lead to job displacement. According to research by OpenAI, approximately 19% of workers could have at least 50% of their tasks affected by the introduction of large language models.

Education

AI holds tremendous promise for improving educational systems, with intelligent features being incorporated into virtual and distance learning, predictive analytics, and the management and administration of teaching and learning. For students, AI can power personalised learning systems. Adaptive learning platforms can analyse student data, assess each student’s strengths and weaknesses, and provide targeted content and feedback. For example, Teach to One is an AI-powered personalised learning program that individualises maths instruction for middle school students. The system uses daily assessments, student preferences and learning objectives to create personalised schedules and tailored lessons for each student. In addition, AI technologies can improve accessibility for students with disabilities and additional learning and support needs by adapting learning materials (e.g. through speech recognition or text-to-speech conversion).
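The adaptive loop described above (assess, update an estimate of each strength or weakness, then target content) can be sketched in a few lines of Python. This is a hypothetical illustration, not how Teach to One or any specific platform works: the topic names, the 0-to-1 mastery scores and the moving-average update rate of 0.3 are assumptions chosen for clarity.

```python
def update_mastery(mastery: dict[str, float], topic: str, correct: bool,
                   rate: float = 0.3) -> None:
    """Nudge the mastery estimate for `topic` towards 1.0 (correct) or 0.0 (incorrect)."""
    target = 1.0 if correct else 0.0
    mastery[topic] += rate * (target - mastery[topic])


def plan_next_lesson(mastery: dict[str, float],
                     results: list[tuple[str, bool]]) -> str:
    """Apply a day's assessment results, then pick the weakest topic to teach next."""
    for topic, correct in results:
        update_mastery(mastery, topic, correct)
    # The topic with the lowest estimated mastery gets the next tailored lesson.
    return min(mastery, key=lambda t: mastery[t])


mastery = {"fractions": 0.5, "decimals": 0.5, "ratios": 0.5}
daily_results = [("fractions", True), ("decimals", False), ("ratios", True)]
print(plan_next_lesson(mastery, daily_results))  # decimals
```

A single incorrect answer lowers the estimate for decimals to 0.35, so decimals become the focus of the next lesson. A real system would also weigh student preferences and learning objectives, as the Teach to One example notes.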

AI can also be an effective tool for supporting teachers. It can streamline administrative tasks such as grading and scheduling, allowing educators to focus more on teaching. For instance, Gradescope uses AI to automate the grading of assignments, saving teachers time and giving students faster feedback. Nevertheless, AI applications in education need to be evaluated carefully, including for potential bias, privacy and data transparency.

Healthcare

Healthcare is one of the most promising areas for AI. In this industry, AI could help make medical services universal, high-quality and affordable. AI-based solutions already help improve the diagnosis of cancer and neurological disorders. At the same time, digital technologies can reduce costs by avoiding losses from low productivity and sick days, and by improving the understanding of risk factors and the selection of appropriate interventions.

AI also has the potential to address some of the most important health issues in low- and middle-income countries, such as HIV/AIDS, psychiatric disorders, and maternal and child health, thereby reducing global health inequalities. However, because AI systems rely on vast amounts of personal data, the risk that AI algorithms extract sensitive information from that data or make inferences about individuals’ private lives needs to be addressed.

Environment

Proponents of AI development highlight its potential to speed up progress towards the Sustainable Development Goals. For example, AI technologies can make the food system more sustainable by automating food production processes, including transport, logistics and the supply chain. AI can also support environmental conservation: for instance, an automated alert system in a park can help track wildlife poachers and prevent illegal practices that threaten species worldwide. Moreover, AI could have a significant impact on fighting climate change. Deep learning algorithms can help improve existing forecasting and prediction systems and deepen our understanding of extreme weather events. At the same time, introducing AI into the climate domain raises several of the social and ethical challenges already associated with AI more generally.

Tech leaders are addressing environmental challenges with AI by forming partnerships with organisations and individuals working to advance sustainability. Through its Social Good program, Google supports projects that tackle social and environmental challenges, ranging from minimising crop damage in India and managing waste in Indonesia to improving air quality in Uganda and adapting to extreme heat. Microsoft’s AI for Earth, a 5-year, $50 million initiative launched in 2017, has supported over 950 projects, organisations and researchers using AI to power sustainability applications.

Risks for Big Tech

As these companies hold powerful AI tools with tremendous influence on the public, the question arises: can Big Tech be trusted? The companies have repeatedly failed to act responsibly and ethically. Google, Facebook, Amazon and Apple have been accused of breaching competition and monopoly laws, violating data protection regulations and avoiding tax liabilities. For example, in 2017 Google was fined US$2.7 billion for breaching EU antitrust rules. In addition, more than 147 lawsuits have been brought against major social media platforms: Facebook, Instagram, TikTok, Snapchat and YouTube. The complaints allege that the companies’ products damage children’s mental health because the algorithms behind the platforms manipulate users into changing their behaviour for the worse. The social media companies dispute the allegations, arguing that a causal link between the rise in social media use and mental health conditions has not been established. Beyond fines, public backlash poses reputational risks for the companies, affecting their brand value and, in turn, their share prices.

Recent job cuts in Facebook’s and Google’s ethics and society teams prompted a wave of concern. Members of these teams are responsible for ensuring that consumer products using AI are safe before release. As new applications of AI boom, ethical impact assessment is crucial to make sure that the effects of AI are positive and its risks are managed.

There is a common misconception that AI technologies never make mistakes. In fact, the probability that an AI system makes mistakes can be high, because its output depends on the quality of its input data, which is often incomplete or inaccurate. So who is at fault when AI makes a mistake? On the one hand, AI systems such as machine learning models do not work without people behind them. Scientists and programmers are responsible for providing adequate datasets and the instructions given to the AI, in order to reduce biased decisions. On this view, an AI system cannot bear liability for its mistakes in the way a legal person can. The approach is illustrated by the fatal accident involving Uber’s test of autonomous cars. Because of a failure in the car’s sensing system, a pedestrian crossing the street was not correctly identified, so the algorithm did not initiate braking. The backup safety driver was held criminally responsible, while Uber did not face any criminal charges.

On the other hand, it can be difficult to bring a lawsuit against a person when there is no direct link between an AI mistake and a specific individual (the user, programmer or owner). Some believe AI should therefore be treated as a legal entity, in which case it would be rational and fair for liability to fall on the AI rather than on individuals. As a result, some jurisdictions are starting to consider granting legal personhood to AI systems in certain cases.
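The earlier point that AI mistakes often trace back to incomplete or inaccurate input data can be illustrated with a toy classifier. The Python sketch below is a hypothetical example, not drawn from any of the cases discussed: it learns a single cut-off value from labelled examples, and two mislabelled training points are enough to shift that cut-off and produce a wrong prediction. The data values and the threshold-learning rule are assumptions chosen for simplicity.

```python
def fit_threshold(xs: list[float], labels: list[int]) -> float:
    """Learn the cut-off that misclassifies the fewest training examples.

    Candidate cut-offs are midpoints between consecutive distinct inputs;
    an input x is predicted as class 1 when x >= cut-off.
    """
    points = sorted(set(xs))
    candidates = [(a + b) / 2 for a, b in zip(points, points[1:])]

    def errors(t: float) -> int:
        return sum((x >= t) != bool(y) for x, y in zip(xs, labels))

    return min(candidates, key=errors)


def predict(threshold: float, x: float) -> int:
    return int(x >= threshold)


xs = [1.0, 2.0, 3.0, 4.0, 6.0, 7.0, 8.0, 9.0]
clean_labels = [0, 0, 0, 0, 1, 1, 1, 1]
noisy_labels = [0, 0, 0, 0, 0, 0, 1, 1]  # the examples at 6 and 7 were mislabelled

clean_t = fit_threshold(xs, clean_labels)  # 5.0
noisy_t = fit_threshold(xs, noisy_labels)  # 7.5
print(predict(clean_t, 6.5), predict(noisy_t, 6.5))  # 1 0
```

The learning rule is identical in both runs; only the data differ. This is in line with the view above that the people who prepare the datasets bear responsibility for adequate inputs.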

The need for responsible AI development also arises from the problem of AI control, that is, aligning AI with human-compatible values. This concern rests on the idea that AI will eventually exceed human capabilities in most domains, which could in turn cause an existential catastrophe. To guide the development of AI systems whose purposes do not conflict with ours, Stuart Russell, a renowned AI researcher, proposes three fundamental principles for ethical AI:

- The machine’s only objective is to maximise the realisation of human preferences.

- The machine is initially uncertain about what those preferences are.

- The ultimate source of information about human preferences is human behaviour.
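The second and third principles amount to a learning problem: the machine starts out uncertain and refines its beliefs by observing what people actually do. A minimal Python sketch of that idea uses Bayesian updating over two hypotheses about a person's preference. Everything here is an illustrative assumption rather than Russell's own formulation: the coffee/tea example, the 0.8 probability that a person picks the option they prefer, and the rule of asking the human when no option reaches 70% confidence.

```python
def update_beliefs(prior: dict[str, float], observed_choices: list[str],
                   accuracy: float = 0.8) -> dict[str, float]:
    """Bayesian update of the machine's beliefs about which option the human prefers.

    Each hypothesis is keyed by the option assumed to be preferred. With two
    options, the human is assumed to choose the preferred one with probability
    `accuracy` and the other one otherwise.
    """
    posterior = dict(prior)
    for choice in observed_choices:
        for hypothesis in posterior:
            likelihood = accuracy if choice == hypothesis else 1 - accuracy
            posterior[hypothesis] *= likelihood
    total = sum(posterior.values())
    return {h: p / total for h, p in posterior.items()}


def choose_action(beliefs: dict[str, float], confidence: float = 0.7) -> str:
    """Serve the most probable preference, or defer to the human if still unsure."""
    option, probability = max(beliefs.items(), key=lambda item: item[1])
    return option if probability >= confidence else "ask the human"


prior = {"coffee": 0.5, "tea": 0.5}  # principle 2: initially uncertain
print(choose_action(prior))  # ask the human

beliefs = update_beliefs(prior, ["coffee", "coffee", "tea"])  # principle 3: learn from behaviour
print(choose_action(beliefs))  # coffee
```

After observing two choices of coffee and one of tea, the belief that the person prefers coffee rises to 0.8. Serving the most probable preference stands in for principle 1, while deferring when uncertain reflects the broader idea that a machine unsure of human preferences has a reason to seek human input.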

Furthermore, given the legal uncertainty and complexity surrounding future progress in AI, the OECD adopted the first intergovernmental standard on AI. It sets out value-based principles and recommendations for policy-makers to help AI actors develop and use AI that is lawful, ethical and technically robust. The principles for responsible stewardship of trustworthy AI are: inclusive growth, sustainable development and well-being; human-centred values and fairness; transparency and explainability; robustness, security and safety; and accountability.

UNESCO has also adopted a universal framework of values for AI ethics, the Recommendation on the Ethics of Artificial Intelligence, which was signed by its 193 member countries on November 25, 2021. The Recommendation includes legislative and other measures to ensure the ethical implementation and governance of AI technologies. OpenAI CEO Sam Altman recently emphasised the importance of AI safety research and of international cooperation in setting regulatory standards to ensure the safe deployment of AI technologies.

Conclusion

The rapid development of AI presents both opportunities and risks for Big Tech companies. While AI offers increased automation, enhanced education, improved healthcare and environmental benefits, responsible development and regulation are essential. Striking a balance between the benefits of AI and concerns over data governance, privacy and public trust is crucial. Emphasising diverse and inclusive programming teams, regular algorithm audits and transparency can help ensure ethical and fair AI development. By addressing risks and embracing responsible practices, Big Tech can harness the potential of AI while safeguarding societal well-being. It is essential for these companies to be at the forefront of responsible AI development, setting the standard for ethical practices in the industry.